The Right Amount of Automation

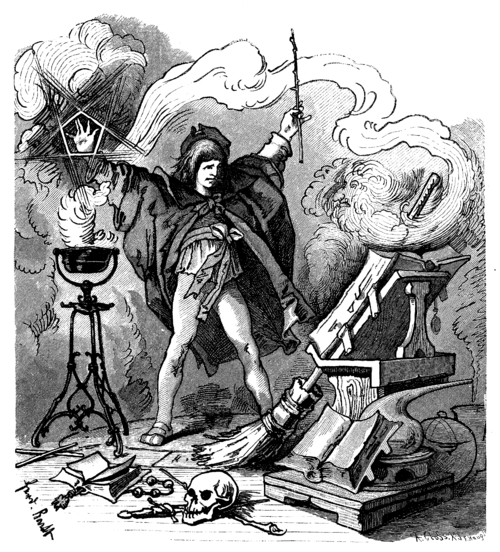

Like many people, I’ve found the move to using AI in my work a little earth-shifting. Listening to a recent episode of The Daily, I realized I wasn’t alone in my journey as a developer through this new world. At first I resisted because AI felt like “too much magic,” and after using AI for a while now I know that my hunch was true. The “magic” seems to be the same as the magic used in “The Sorcerer’s Apprentice” (or the German “Der Zauberlehrling”), wherein a mindless object can do your work, with possibly deleterious effects.

My relationship with AI has changed, though. Instead of resisting and bristling like I did at first, I’m finding the places where it makes the most sense for me. Working in the robotics industry gave me a healthy level of skepticism. Less than a decade ago we were bracing ourselves for a future of self-driving cars, a dream that was never fully realized. Working on other robotic systems, I learned that we eventually merge the optimistic (borderline science fiction) with reality, and in the end we just improve our productivity. This is the idea behind the “co-bot”: a collaborative robot designed to work alongside humans rather than replace them. It’s a concept that has been gaining traction in manufacturing, and it’s a roundabout way of solving the “last mile problem”: that final stretch of a process where automation repeatedly falls short and human judgment has to take over.

The Robot Problem

All of this reminds me of a passage from The Goal by Eliyahu Goldratt. In it, the main character encounters an old professor and mentions that his plant recently installed robots. The professor asks: “Have they increased the productivity at your plant?” The answer soon becomes clear: the automation didn’t actually help the bottom line. The robots were an expense that created a lot of new problems. They didn’t fit into the overall system very well. Eventually the plant finds the place and pace at which the robots can be effective, but only after working through the various system changes explored throughout the book.

This is where I’m at with AI. We see developers throwing huge problems at AI to solve and eventually winnowing them down into smaller, more focused pieces. Neither extreme is effective. If the problem you pose is too broad, you often end up with a mess of code. If it is too narrow, you’re micromanaging everything.

The Overtaxed Developer

We have been in an age of asking developers to assume more and more roles. They are now architects, DBAs, QA engineers, and DevOps engineers, all while still writing code. If they are senior enough they become “leads” or some other title that brings a touch of people management. This is all in the name of becoming “full stack” or in the spirit of “shifting left.” And it feels risky and untenable.

Why do we do this? Well, it makes sense for developers to understand what they can about the product, how it works, and how it’s tested. But when you’re juggling too many things you tend to context switch. The way to solve this was through project management: do a spike, write a design, create a test plan, break down the work into tickets, distribute them amongst the team, and be the point of contact for questions. A failure occurs and immediately you are in debug mode.

Now all of this sounds like you are doing it on your own, but in reality you are one of many developers doing the same thing. Then along comes the initiative to use AI. All of a sudden these various pieces of work become management tasks. These management tasks then get completed the way a developer would complete them: set up a few commands, walk away like you’re waiting for a system to compile, briefly read over the results, verify the tests, and ship. The level of investigation and effort becomes proportional to the time you’re given or the developer high you’re currently on from shipping something so quickly.

The end result is a semi-ignorant developer and a PR with code that could have come from anyone from a junior developer to a principal engineer, with quality fluctuating between the two. So how do you manage this?

Peak Hype

I believe we are reaching a peak with AI, at least in the development world, which is often a bellwether for the rest of the career world, and that we will slowly approach the “trough of disillusionment.” A lot of the industry is shedding developers in order to save money, thinking AI will fill those gaps. Even a parent I know who works at a consulting firm gave me some advice the other day. “I hope you know how to code AI agents,” she said, going on to explain that they are cutting jobs. What tumbled from my mouth was this:

“Let me know when your company is hiring again, once they realize they won’t get the productivity they were expecting from AI.” This wasn’t a nihilistic view, nor a jaded one, but one that comes from thinking about this scenario for quite a while. And again to quote The Goal: “If you’re like nearly everybody else in this world, you’ve accepted so many things without question that you’re not really thinking at all.”

Having made such an arrogant statement, I should probably back it up. Reaching back to my robotics days, the notion of a “human in the loop” seems to be necessary, and AI is just a software version of a robot in my opinion. Productivity, as The Goal defines it, is “the act of bringing a company closer to the goal. Every action that brings the company closer to the goal is productive. Every action that does not bring the company closer to its goal is not productive.” Here “the goal” is to make money. Robots and AI don’t make money. Products that sell do. Robots and AI can do things faster and sometimes more efficiently, but they can only go as fast as the system they are in. Eventually they hit a bottleneck and work backs up. This work in progress (WIP) is money stuck in the system.
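To make the bottleneck point concrete, here is a minimal Go sketch of a three-stage pipeline. Everything in it is an assumption for illustration: the stage names, the daily capacities, and the `Stage` and `Simulate` names are hypothetical and not taken from The Goal or from any real system. It only shows that speeding up a stage upstream of the bottleneck grows the queue in front of the bottleneck without shipping anything more.

```go
package main

import "fmt"

// Stage is a hypothetical step in a delivery pipeline.
type Stage struct {
	Name     string
	Capacity int // items the stage can finish per day (assumed numbers)
}

func minInt(a, b int) int {
	if a < b {
		return a
	}
	return b
}

// Simulate feeds arrivalsPerDay new items into the first stage each day.
// It returns how many items left the last stage and how many are still
// queued in front of each stage when the run ends.
func Simulate(stages []Stage, arrivalsPerDay, days int) (int, []int) {
	shipped := 0
	queues := make([]int, len(stages))
	for d := 0; d < days; d++ {
		queues[0] += arrivalsPerDay
		// Process the last stage first so an item advances at most
		// one stage per day.
		for i := len(stages) - 1; i >= 0; i-- {
			done := minInt(queues[i], stages[i].Capacity)
			queues[i] -= done
			if i == len(stages)-1 {
				shipped += done
			} else {
				queues[i+1] += done
			}
		}
	}
	return shipped, queues
}

func main() {
	// Compare a "generate" stage at 10 items/day with one at 20 items/day.
	// Review (3 items/day) is the assumed bottleneck in both runs.
	for _, rate := range []int{10, 20} {
		stages := []Stage{
			{Name: "generate", Capacity: rate},
			{Name: "review", Capacity: 3},
			{Name: "deploy", Capacity: 5},
		}
		shipped, queues := Simulate(stages, rate, 5)
		fmt.Printf("generate=%d/day shipped=%d waiting=%v\n", rate, shipped, queues)
	}
}
```

With these assumed numbers, five days of work ships 9 items in both runs. Doubling the generation rate only grows the pile waiting for review, from 38 items to 88, which is the WIP stuck in the system.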

The true place for AI is within the structure of a larger system designed and built for developers and the team as a whole, not as a wholesale replacement. Developers will need to build tools that help them distill design documents, create tickets, develop architectures, validate using tests, and deploy to production, all with the assistance of AI, but not replacing any of it fully.

Experiments in Progress

I’ve thought a lot about this and am currently running experiments myself. For years I’ve had a backlog of ideas and things I wanted to build, and I thought AI would help propel me to where I wanted to go. And it did… for the first week. I started to realize that even on a greenfield project the AI system would eventually start falling over itself. So I added the guardrails I wrote about in Shipping Go, such as unit tests, linting, and the like, hoping they would make AI more efficient. In some cases they did. But what I’ve been finding is that the management side is what’s missing. I can ask it to do something, but then I need to verify it anyway, which takes time. I’ve ended up in code churn due to poorly planned features, and found that the AI had gone down a completely wrong path.

This all felt like handing my work and ideas over to junior devs and waiting a week for a demo, only to find out it was way off the mark.

This isn’t a new realization for me. In a talk I gave at Code & Supply in late 2023, Don’t Stop Repeating Yourself, I drew on Frederick Winslow Taylor’s principles of scientific management: replace rule-of-thumb drudgery with studied, repeatable process so that cognitive energy goes toward solving actual problems rather than standing up yet another API endpoint. The OODA loop (Observe, Orient, Decide, Act) is useful here too. Before asking AI to build something, you need to observe the actual problem, orient around the right scope, decide on the task decomposition, and then act. AI is most effective at that act step, but only when the prior three steps were verified by a human. As I said back then: AI will get you 90% of the way there so you can focus on the last 10%. That 10% may not be as exciting as the algorithm you were going to write, but it’s exactly where the real value lives.

So what did I do? I went back to Project Management 101 and created a list of composable tasks, and my bugs went down. I’m now running experiments around this across three personal projects, all of which are experimental and very much works in progress: a personal knowledge management platform, a creative tooling project for my kids, and a domain-specific operations dashboard. Each one is giving me useful signal on what is working and what isn’t. As part of this I’m hoping to build tools that assist in establishing a strong, predictable flow of work through the development process.

The Factory Floor

I used the analogy of CI being a conveyor belt in Shipping Go to describe how code moves through a system. If you can imagine humans monitoring robotic welding arms and analyzing the results of automated laser scanning in the creation of a car, I think you can picture what I believe the ideal development environment looks like. Automation handles the repetitive, measurable work. Humans watch the line, catch what the sensors miss, and make the calls that require judgment. And in reality, this same process will scale to other systems of information work as well.

The apprentice in The Sorcerer’s Apprentice didn’t fail because he used magic. He failed because he walked away. He set something powerful in motion, abdicated his judgment, and let it run unchecked until the room was flooded. The lesson isn’t that magic is dangerous; it’s that presence is non-negotiable. The right amount of automation is whatever amount keeps you in the room, watching the line, ready to make the call the spell can’t make for itself.